Ethical Considerations of AI in Healthcare: Striking a Balance between Innovation and Privacy

As a healthcare executive, you’re likely excited about the promise of artificial intelligence to drive innovation in care delivery, reduce costs, and improve outcomes. However, you also recognize that the use of AI in healthcare raises ethical questions that must be addressed. How can you implement innovative AI solutions while safeguarding patient privacy and building trust? Achieving the right balance requires diligence, partnership, and a commitment to responsible progress.

On October 30, 2023, US President Joe Biden issued an Executive Order on AI that aims to establish new standards for AI safety and security and to promote innovation and trust in AI. Among other provisions, the order requires developers of the most powerful AI systems to share their safety test results and other critical information with the US government, emphasizing transparency and accountability. At Fusemachines, we strongly endorse this move, which enhances trust and supports responsible AI adoption in healthcare.

Healthcare leaders should thoughtfully evaluate how AI tools can enhance their work, then implement them responsibly in partnership with trusted technology providers. They must also ensure AI systems are fair, unbiased and inclusive. With prudent oversight and governance, AI can improve healthcare quality, access and affordability.

Key Ethical Considerations for AI in Healthcare

To use AI in healthcare responsibly, leaders must weigh several key ethical factors.

Privacy and Data Security

AI systems rely on large amounts of patient data, so privacy and security are paramount. Healthcare providers must obtain proper consent before collecting and using patient data and allow patients to opt out of data sharing if they wish. Strict security measures, including encryption and access controls, should be implemented to prevent unauthorized access. Partnering with trusted technology companies that build security and privacy into their AI solutions from the start can help reduce risk.
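To make consent and access controls concrete, here is a minimal sketch of how they might be enforced before data ever reaches a model. It assumes a hypothetical `PatientRecord` type with a `consented_to_ai_use` flag and a hypothetical `ROLE_PERMISSIONS` map; neither comes from any specific product, and a real deployment would load roles and consent status from the organization's own policy and records systems.

```python
from dataclasses import dataclass, field


@dataclass
class PatientRecord:
    patient_id: str
    consented_to_ai_use: bool  # hypothetical consent flag captured at intake
    data: dict = field(default_factory=dict)


# Hypothetical role-to-permission map; a real system would load this from policy.
ROLE_PERMISSIONS = {
    "clinician": {"read_clinical"},
    "data_scientist": {"read_training_set"},
}


def training_set(records, role):
    """Return records usable for model training, enforcing access control and opt-outs."""
    if "read_training_set" not in ROLE_PERMISSIONS.get(role, set()):
        raise PermissionError(f"role {role!r} may not access training data")
    # Patients who opted out are excluded before any data reaches the model.
    return [r for r in records if r.consented_to_ai_use]


records = [
    PatientRecord("p1", True),
    PatientRecord("p2", False),  # this patient opted out
    PatientRecord("p3", True),
]

usable = training_set(records, "data_scientist")
print([r.patient_id for r in usable])  # prints ['p1', 'p3']
```

Calling `training_set(records, "clinician")` raises `PermissionError`, because in this illustrative policy only the data science role may read the training set.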

Bias and Fairness

AI systems can reflect and even amplify the biases of their human creators. Healthcare providers must ensure AI systems are fair, unbiased, and don’t discriminate against groups of people. AI training data should be representative of diverse populations. AI systems should be monitored once deployed to quickly detect and mitigate any emerging biases.
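Post-deployment bias monitoring can start with something as simple as comparing a model's performance across patient groups. The sketch below computes sensitivity (the share of true positive cases the model catches) for each group and flags any group that trails the best-performing group by more than a chosen gap. The function names, the tuple format, and the 0.10 threshold are illustrative assumptions, not a standard; real monitoring would use larger samples and several fairness metrics.

```python
from collections import defaultdict


def subgroup_sensitivity(records):
    """Return sensitivity (true positive rate) per demographic group.

    Each record is a (group, y_true, y_pred) tuple with binary labels.
    """
    positives = defaultdict(int)  # actual positive cases per group
    detected = defaultdict(int)   # positive cases the model caught per group
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                detected[group] += 1
    return {g: detected[g] / positives[g] for g in positives}


def flag_disparities(rates, max_gap=0.10):
    """Flag groups whose sensitivity trails the best group by more than max_gap."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > max_gap)


# Illustrative predictions for two hypothetical patient groups.
records = [
    ("A", 1, 1), ("A", 1, 1), ("A", 1, 0), ("A", 0, 0),
    ("B", 1, 0), ("B", 1, 0), ("B", 1, 1), ("B", 0, 1),
]

rates = subgroup_sensitivity(records)
print(flag_disparities(rates))  # prints ['B']
```

Here the model catches two of three positive cases in group A but only one of three in group B, so group B is flagged for review.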

Transparency and Explainability

AI technologies should be transparent and explainable. Healthcare organizations should understand how their AI systems work and be able to explain the systems’ decisions and recommendations clearly to clinicians and patients. Opaque “black box” systems that cannot be explained may not be suitable for deployment in healthcare.

Human Oversight and Judgment

Human physicians and healthcare workers should maintain oversight of any AI systems deployed. AI should be viewed as a tool to augment human capabilities, not replace them. Healthcare providers must ensure physicians and clinicians remain involved in the delivery of care. With appropriate safeguards and oversight in place, AI can be implemented responsibly and for the benefit of all.

Click here to explore how Fusemachines transforms healthcare businesses with its expert AI solutions. To schedule a complimentary consultation today, click here.

Maintaining Patient Privacy With Explainable AI

To fully realize the benefits of AI in healthcare while protecting patient privacy, organizations must build AI systems with oversight, accountability, and transparency in mind. Explainable AI that provides insight into how algorithms reach their conclusions is key.

Transparency and Oversight

With AI increasingly used as a clinical decision support system to analyze patient data and suggest diagnoses or treatment plans, healthcare providers must demand transparency into how AI systems work. AI systems should be designed to provide details on the data used to develop the algorithms, the weights given to different variables, and how the AI reaches each conclusion.
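For simple models, “the weights given to different variables” can be surfaced directly. The sketch below assumes a hypothetical logistic risk model with made-up weights (it is not a real clinical model) and reports each variable’s contribution to the log-odds alongside the predicted risk, ranked by influence. This direct readout only works for linear models; more complex models typically require post-hoc explanation methods instead.

```python
import math

# Hypothetical weights for an illustrative logistic risk model (not a real clinical model).
WEIGHTS = {"age_decades": 0.30, "hba1c_pct": 0.45, "smoker": 0.80}
INTERCEPT = -5.0


def explain(patient):
    """Return the predicted risk and each variable's contribution to the log-odds."""
    contributions = {name: w * patient[name] for name, w in WEIGHTS.items()}
    log_odds = INTERCEPT + sum(contributions.values())
    risk = 1 / (1 + math.exp(-log_odds))  # logistic function maps log-odds to a probability
    # Sort so the most influential variables appear first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return risk, ranked


patient = {"age_decades": 6, "hba1c_pct": 8.0, "smoker": 1}
risk, ranked = explain(patient)
print(f"predicted risk: {risk:.2f}")
for name, contribution in ranked:
    print(f"  {name}: {contribution:+.2f}")
```

A clinician reviewing this output could see that, in this made-up model, the HbA1c value drives the risk estimate more than age or smoking status.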

Oversight committees should review AI systems before implementation to determine how patient data is collected, used, and protected. They must ensure personally identifiable information is kept private and used only to improve patient care. Regular audits of AI performance, data use, and privacy safeguards are also needed. Partnerships with trusted technology companies like Fusemachines that focus on privacy and ethics can help healthcare organizations deploy AI responsibly.

Accountability

When AI is used in healthcare, accountability is key. Healthcare providers remain responsible for patient care and oversight of any AI tools used. They must understand how to properly use AI to support clinical decisions without overreliance on algorithms. Patients should also understand when and how AI is used in their care so they can ask questions and provide fully informed consent.

If an AI system proves inaccurate or inappropriate for clinical use, it must be addressed immediately. Healthcare organizations need processes to report issues, suspend or terminate use of the AI, notify any patients affected, and make corrections to the algorithms and data as needed. With vigilance and a commitment to ethics, healthcare organizations can benefit from innovative AI solutions while upholding privacy. But they must demand AI that is transparent, provide oversight and accountability, and put patients first.

The Exciting Future of Ethical AI Innovation in Healthcare

The future of AI in healthcare is exciting and promising. With proper safeguards and oversight in place, AI can transform how care is delivered and improve patient outcomes.

Optimizing Care Pathways

AI has the potential to analyze huge amounts of data to better understand treatment protocols, identify optimal care pathways, and reduce waste and inefficiency. For example, AI could review millions of electronic health records and clinical trial data to determine the most effective sequence of treatments for a given condition based on a patient’s unique attributes. Physicians could then customize care plans based on these AI-generated insights.

Enhancing Diagnostics

AI shows promise for improving the accuracy of medical imaging and diagnostics. Machine learning algorithms can detect subtle patterns in scans and tests that humans may miss. For instance, AI systems have shown promise in identifying early signs of diabetic retinopathy, cancerous lesions, and other health issues. With further development, AI could help physicians diagnose diseases more quickly and precisely, allowing for earlier treatment. AI is already being used outside clinical settings to assess patients’ risk for multiple diseases, analyzing structured and unstructured data to flag undiagnosed conditions and help close gaps in care.

Personalizing Treatment

In the future, AI may help personalize medicine by generating tailored treatment recommendations for each patient based on their genetics, lifestyle, environment, and health history. However, truly personalized care will require strong privacy protections, transparency, and patient consent regarding how personal data is collected and used to generate insights. Healthcare organizations must establish data governance policies and partner only with trusted technology vendors to ensure AI systems are aligned with ethical values.


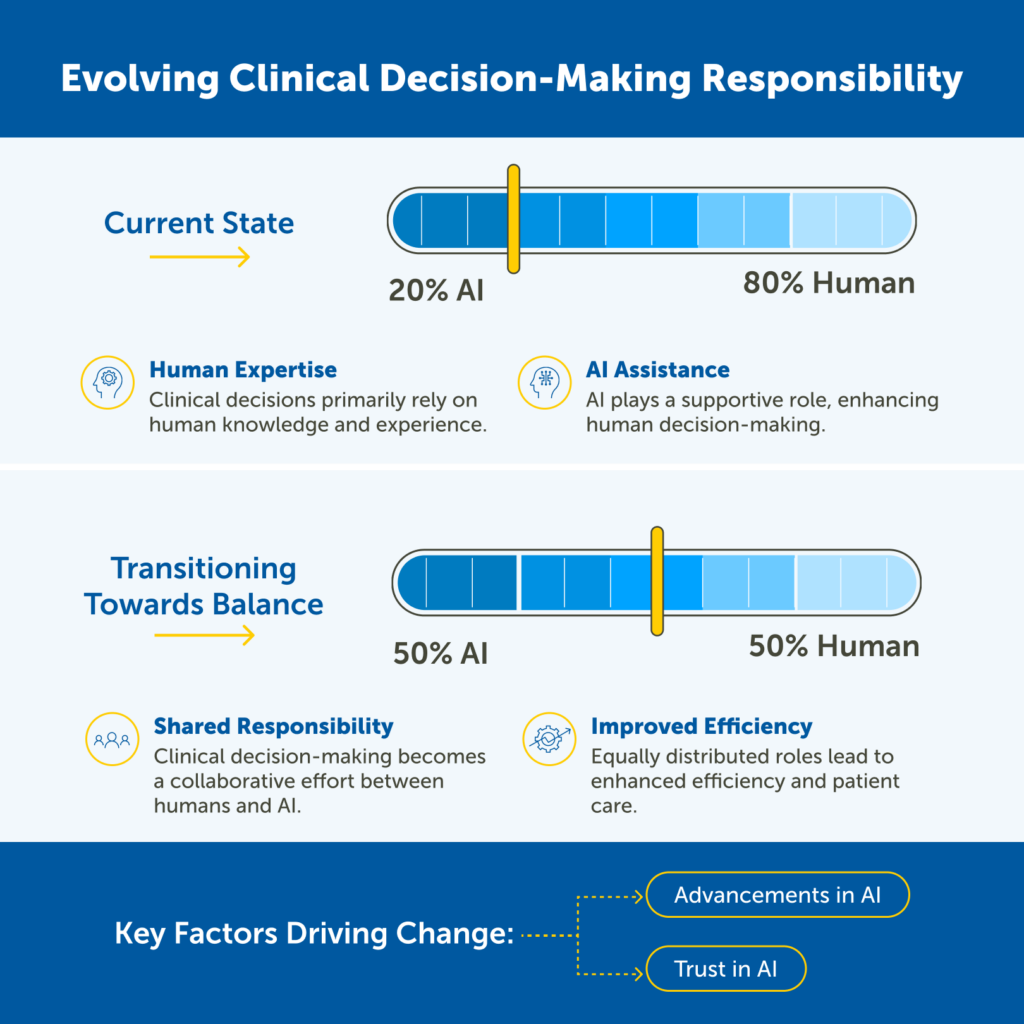

Evolving Role of AI in Clinical Decision Making

The role of AI in clinical decision making is evolving rapidly. Currently, AI is used primarily in an assisting role, providing clinicians with information and recommendations to help them make decisions. However, some observers expect that in the near future AI and humans will share responsibility for clinical decisions on a more equal basis.

Several factors are driving this evolution. First, AI models are becoming increasingly sophisticated and accurate, thanks to advances in machine learning algorithms and the availability of large medical datasets. Second, clinicians are becoming more comfortable using AI in their practice, owing to a growing awareness of its potential benefits and the increasing availability of user-friendly AI tools.

The shift to shared responsibility between AI and humans will have a number of implications for clinical decision making. First, it will allow clinicians to focus on more complex and nuanced decisions, while leaving more routine tasks to AI. Second, it will lead to more personalized and individualized care, as AI can be used to tailor treatments to the specific needs of each patient. Third, it will improve the efficiency and quality of care, as AI can help to identify and address potential problems early on.

The possibilities for improving care with AI are vast but must be balanced with privacy, ethics and human oversight. By establishing principles of responsible innovation, the healthcare industry can harness the power of AI to enhance care quality, improve outcomes and reduce costs for all. But human physicians, not machines, should take ultimate responsibility for treatment decisions. With prudent management and safeguards in place, AI can make healthcare more accurate, efficient and personalized.

Partnering With an AI Company like Fusemachines

To fully realize the benefits of AI in healthcare while still upholding strict privacy standards, healthcare organizations must choose their technology partners wisely. Partnering with a reputable AI company that prioritizes data privacy and ethical practices is key.

Experience and Expertise

Look for an AI company with experience in the healthcare industry and a proven track record of success. They should employ data scientists and engineers with expertise in healthcare data and a dedication to privacy and ethics. An ideal partner will have experience handling sensitive health data and implementing strong privacy controls. They should be up to date with healthcare data regulations and willing to help your organization achieve compliance.

Values and Commitment to Ethics

The company you choose should demonstrate a strong commitment to ethics and share your organization’s values around privacy, trust, and beneficence. They should have a code of ethics that governs how they develop and deploy AI systems. It’s important that they consider the ethical implications of their technologies and how they could negatively impact patients. An ethical company will be transparent about how patient data is used and stored. They will also build privacy safeguards and oversight into their AI systems.

Continuous Monitoring and Improvement

Partnering with an AI company is not a set-it-and-forget-it scenario. They should continuously monitor AI systems for accuracy, privacy, and ethical issues, making improvements as needed. Your organization should maintain open communication with the company and stay up to date on their privacy practices and policies.

By choosing an AI partner committed to ethics, privacy, and innovation, healthcare organizations can feel confident implementing advanced technologies while still protecting patient trust. With diligent oversight and monitoring, the partnership can enable safe and ethical progress.


Bottom Line

AI and machine learning have the potential to transform healthcare in remarkable ways, improving diagnostics, personalizing treatment, and streamlining processes. However, with these benefits comes significant responsibility. Healthcare organizations must ensure AI systems are rigorously tested for accuracy and bias, patient data is kept private and secure, and people remain involved in and informed about AI-assisted decision making about their health.

If healthcare leaders are proactive, transparent, and put patients first when implementing AI, the rewards can be life-changing, and ethical standards can be upheld along the way. The future is bright if we’re thoughtful about how we get there.
