Supercomputing, 5G, & Enterprise AI: Takeaways from Nvidia’s 2021 Conference

Nvidia’s GPU Technology Conference (GTC) 2021 showcased the latest breakthroughs in AI, HPC (high-performance computing), data science, graphics, gaming, healthcare, cloud, 5G, industrial, automotive, and more. Founder and CEO Jensen Huang opened the keynote with a string of announcements, including Nvidia’s plan to become a three-chip company with a new Arm-based data center CPU, the launch of Omniverse Enterprise, the cuQuantum SDK, and more.

The week-long event featured 1,600 talks by expert panelists and industry leaders, who presented some of the latest work in COVID-19 research, data science, cybersecurity, computer graphics, AI, and robotics.

Software will be written by software running on AI computers. – Jensen Huang, CEO, Nvidia

Here are 5 key takeaways from the conference.

Nvidia announces Omniverse Enterprise

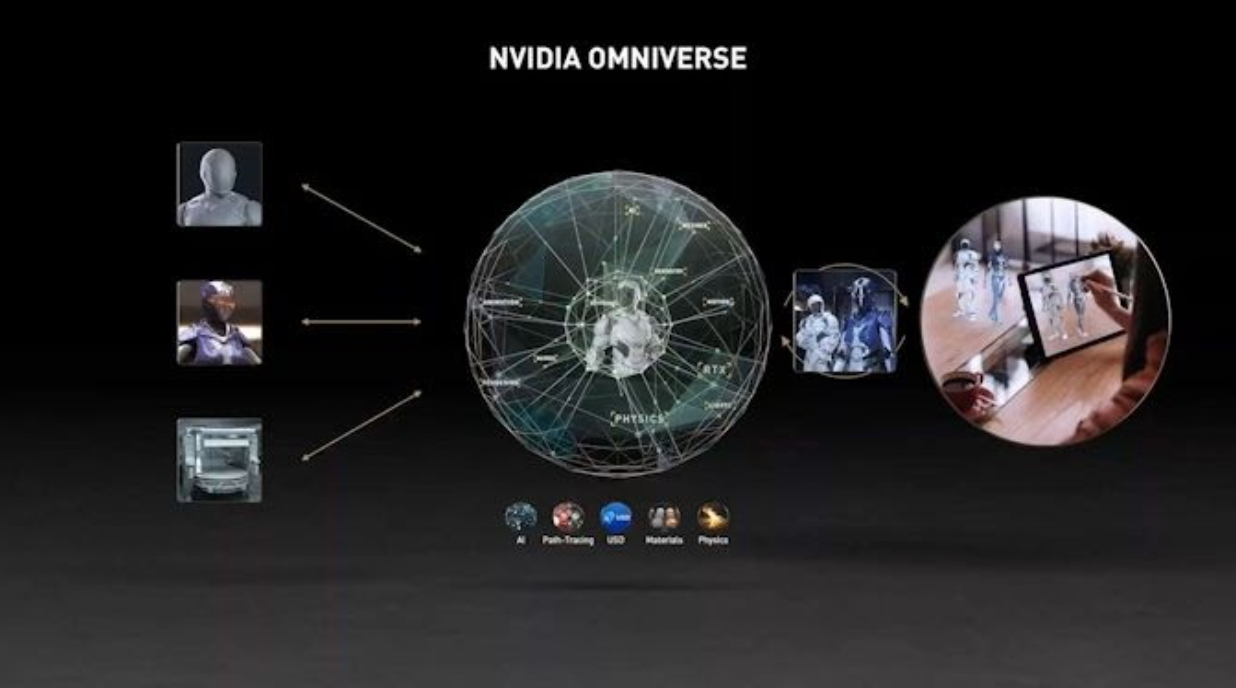

After opening the Omniverse design and collaboration platform to open beta in December 2020, Nvidia announced Omniverse Enterprise. Omniverse is a shared simulation and graphics platform for collaborative work: scenes are fully path traced, physics is simulated by Nvidia PhysX, materials are defined with Nvidia MDL, and the platform integrates Nvidia AI.

Jensen described the company’s next mission: creating a metaverse, an artificially built environment where companies can simulate the future. He described it as a virtual world thousands of times larger than the real one, containing a “digital twin” of every single factory, building, car, city, and person. Nvidia is already using the 3D platform to remodel factories and machinery, and computer makers worldwide are building Nvidia-Certified workstations, notebooks, and servers optimized for Omniverse. Nvidia even showcased a digital twin of a BMW factory built in Omniverse!

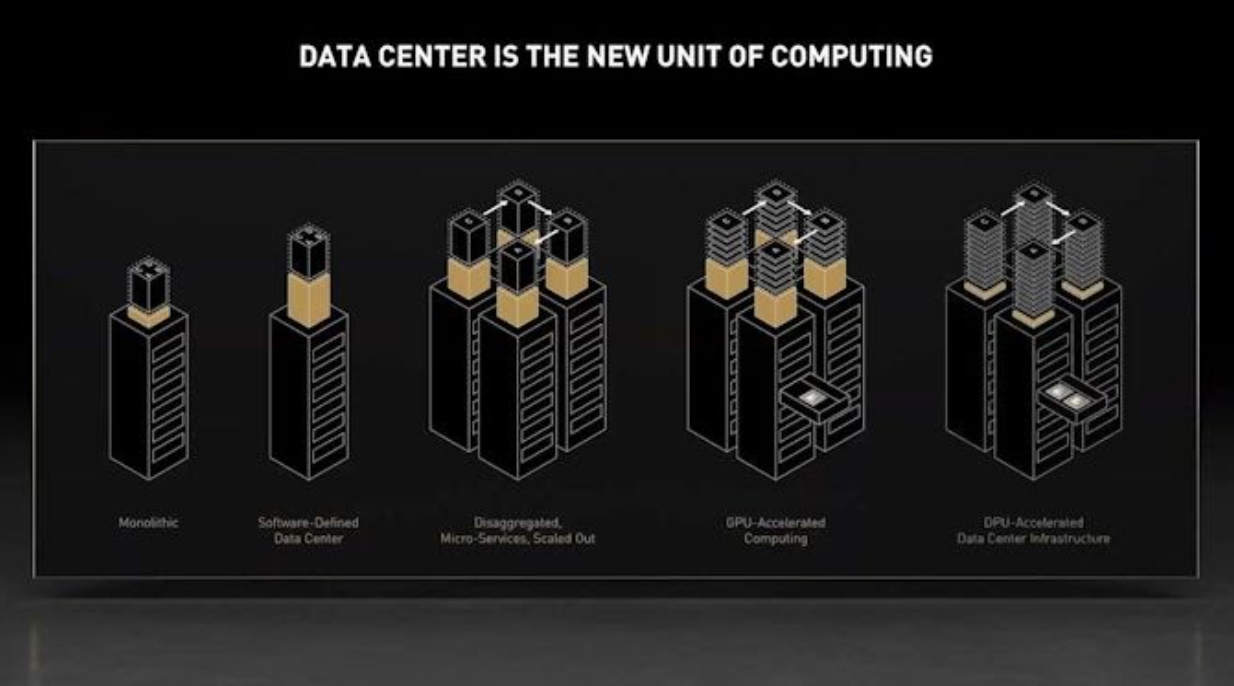

Launch of data center CPU, Grace

Jensen announced Grace, Nvidia’s first data center CPU. Each Grace CPU is expected to deliver a SPECint rate of about 300, so an eight-GPU DGX system built with Grace would exceed 2,400 in total. The chipmaker also introduced BlueField-3, the first DPU (data processing unit) built for AI and accelerated computing. With 22 billion transistors and a 400 Gb/s network processor, it lets organizations run applications with industry-leading performance and data center security. BlueField offloads many network functions, such as SSL encryption and security inspection, from the host CPU.

AI and 5G are the ingredients to kickstart the 4th industrial revolution. – Jensen Huang, CEO, Nvidia

AI and 5G will fuel the next wave of IoT services

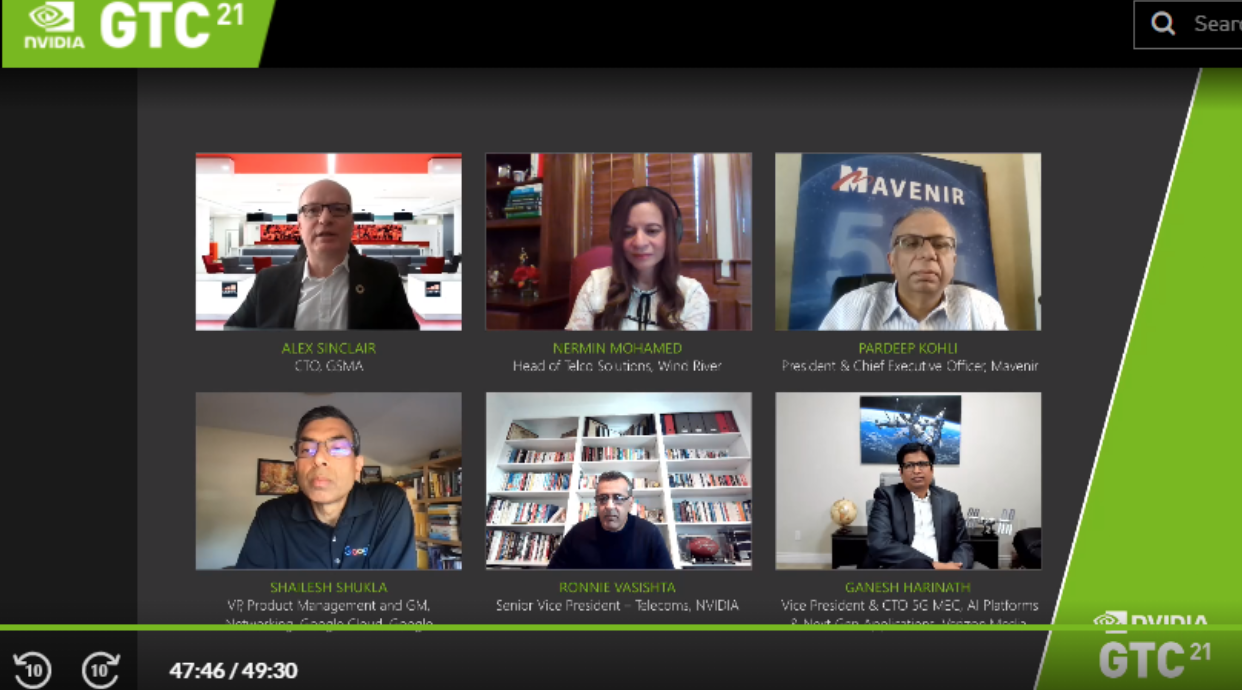

A panel of five experts discussed how 5G and AI will fuel the next wave of IoT services. Executives from Verizon, Wind River, Mavenir, Google, and Nvidia shared their thoughts on how 5G will affect edge AI services, and brainstormed how next-generation AI and 5G applications could improve phone calls and signal quality.

Nermin Mohamed, head of telco solutions at Wind River, called 5G, AI, and edge computing “the three magic words that will propel the digital connected world.” According to Shailesh Shukla of Google Cloud, the arrival of 5G and AI creates an opportunity to reshape the broader telecom infrastructure and edge industry, much as Google did with Android.

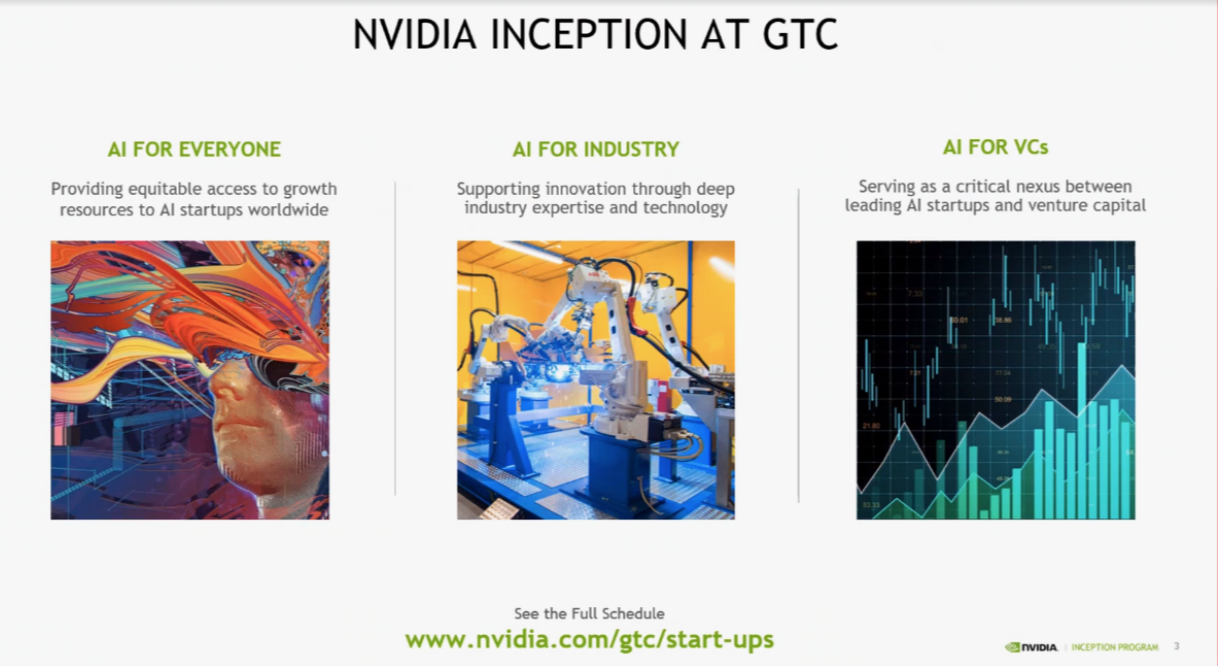

AI startups: Nvidia’s Inception and Insights

In this interactive session, panelists discussed efforts to nurture and inspire the broadest possible cohorts of AI startups. The three major tracks of Nvidia Inception are AI for Everyone, AI for Industry, and AI for Venture Capitals. Herbst explained how GTC plans to give AI startups equitable access to growth resources and to support innovation through industry expertise and technology. The panelists also discussed industries likely to be heavily disrupted by AI, such as healthcare, agriculture, and media.

MLOps, Enterprise AI and cuQuantum

Nvidia’s EGX enterprise platform allows both existing and modern data-intensive applications to be accelerated and secured on the same infrastructure. It brings accelerated computing from the data center to the edge with a range of optimized hardware, easy-to-deploy application and management software, and an ecosystem of partners who offer EGX. Huang also gave a brief look at a system that covers the entire workflow for customizing AI models, applying transfer learning to your own data to fine-tune pretrained models.

“No one has all the data – sometimes it’s rare, sometimes they are trade secrets. No model can be trained for every skill. And the specialized ones are the most valuable,” said Huang as he introduced Nvidia TAO.
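Transfer learning keeps a pretrained model’s learned weights and retrains only a small part of the model on new, domain-specific data. The minimal sketch below illustrates that general idea under loud assumptions: the “pretrained” feature extractor (`FROZEN_WEIGHTS`, `extract_features`), the helper names, and the six data points are all hypothetical, and only a new logistic-regression head is trained. It is not Nvidia TAO’s workflow or API.

```python
import math

# Hypothetical "pretrained" feature extractor. Its weights stay frozen:
# transfer learning reuses them as-is instead of retraining them.
FROZEN_WEIGHTS = ((1.0, 1.0), (1.0, -1.0))

def extract_features(x):
    """Map a raw 2-D input to features using the frozen pretrained weights."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in FROZEN_WEIGHTS]

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def fine_tune_head(data, lr=0.1, epochs=1000):
    """Train only a new logistic-regression head on top of the frozen features."""
    head = [0.0, 0.0]  # trainable weights, one per feature
    bias = 0.0         # trainable bias
    feats = [(extract_features(x), y) for x, y in data]
    n = len(feats)
    for _ in range(epochs):
        grad_w = [0.0, 0.0]
        grad_b = 0.0
        for f, y in feats:
            p = sigmoid(sum(w * fi for w, fi in zip(head, f)) + bias)
            err = p - y  # gradient of the log loss with respect to the logit
            for k in range(len(head)):
                grad_w[k] += err * f[k] / n
            grad_b += err / n
        # Plain gradient descent on the head only; FROZEN_WEIGHTS never change.
        head = [w - lr * g for w, g in zip(head, grad_w)]
        bias -= lr * grad_b
    return head, bias

def predict(head, bias, x):
    f = extract_features(x)
    return 1 if sum(w * fi for w, fi in zip(head, f)) + bias > 0 else 0

# Small hypothetical "customer" dataset: label 1 when x0 + x1 > 0.
DATA = [((2.0, 1.0), 1), ((1.0, 2.0), 1), ((1.5, 0.5), 1),
        ((-2.0, -1.0), 0), ((-1.0, -2.0), 0), ((-0.5, -1.5), 0)]

if __name__ == "__main__":
    head, bias = fine_tune_head(DATA)
    accuracy = sum(predict(head, bias, x) == y for x, y in DATA) / len(DATA)
    print(f"training accuracy: {accuracy:.2f}")
```

Freezing the extractor keeps the number of trainable parameters tiny, which is why a small, specialized dataset can be enough to adapt the model.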

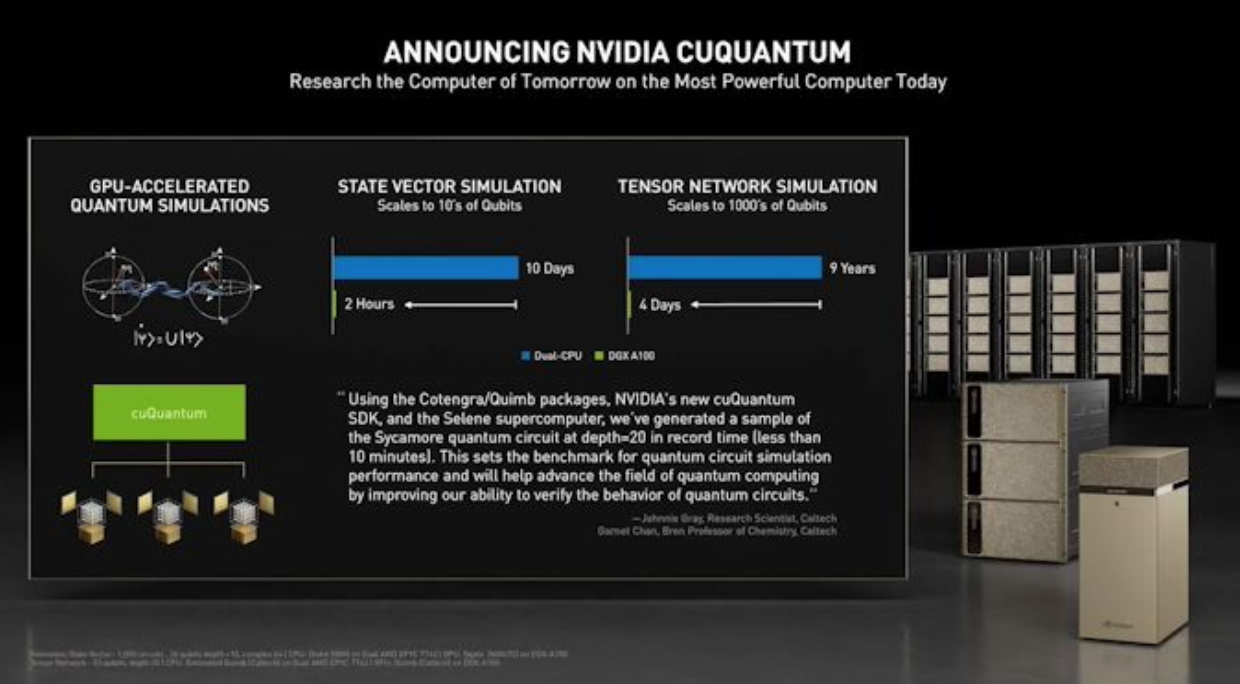

Nvidia also announced cuQuantum, a new software development kit. It is an acceleration library for simulating quantum circuits, designed for both tensor network solvers and state vector solvers. It scales to large GPU memories, multiple GPUs, and multiple DGX nodes. Developers can use it to speed up quantum circuit simulations based on state vector, density matrix, and tensor network methods by orders of magnitude.
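A state vector simulator stores all 2^n complex amplitudes of an n-qubit register and updates them gate by gate, which is why memory demands grow so quickly and why scaling across GPUs and DGX nodes matters. The plain-Python sketch below illustrates that method on a two-qubit Bell-state circuit (a Hadamard followed by a CNOT). It does not use cuQuantum, and the function names are hypothetical.

```python
import math

def apply_single_qubit_gate(state, gate, target):
    """Apply a 2x2 unitary `gate` to qubit `target` (qubit 0 = least significant bit)."""
    new_state = list(state)
    mask = 1 << target
    for i in range(len(state)):
        if (i & mask) == 0:  # visit each amplitude pair (i, i | mask) once
            j = i | mask
            a0, a1 = state[i], state[j]
            new_state[i] = gate[0][0] * a0 + gate[0][1] * a1
            new_state[j] = gate[1][0] * a0 + gate[1][1] * a1
    return new_state

def apply_cnot(state, control, target):
    """CNOT permutes amplitudes: flip the target bit wherever the control bit is 1."""
    new_state = list(state)
    for i in range(len(state)):
        if (i >> control) & 1:
            new_state[i ^ (1 << target)] = state[i]
    return new_state

def bell_state_probabilities():
    n_qubits = 2
    state = [0j] * (2 ** n_qubits)  # 2**n amplitudes: memory grows exponentially
    state[0] = 1 + 0j               # start in |00>
    h = 1 / math.sqrt(2)
    hadamard = ((h, h), (h, -h))
    state = apply_single_qubit_gate(state, hadamard, target=0)
    state = apply_cnot(state, control=0, target=1)
    return [abs(a) ** 2 for a in state]  # measurement probabilities

if __name__ == "__main__":
    print(bell_state_probabilities())  # approximately [0.5, 0.0, 0.0, 0.5]
```

Each additional qubit doubles the length of `state`, so a 40-qubit circuit already needs on the order of 2^40 amplitudes; this is the scaling pressure that multi-GPU state vector libraries are built to handle.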

From building GPUs for video games to leading groundbreaking innovations in data science, AI, and computing, Nvidia is leading the fourth industrial revolution. After years of innovation and experimentation, Nvidia announced Grace, the company’s first data center CPU, built for workloads that tap giant datasets, such as natural language processing, recommender systems, and AI supercomputing. After opening the Omniverse design and collaboration platform in December, the company is rolling out Omniverse Enterprise, with over 400 major companies already using it. With BlueField-3, the company enables real-time network visibility and cyberthreat detection for organizations, while many other innovations, such as autonomous vehicles and a new SDK for quantum circuit simulation, are poised to disrupt AI and technology further.